Storytellers Rule the World

A UCL professor told the BBC that in political speeches, metaphors are chosen “purposefully and really consciously to try to make people perceive a situation in a certain kind of way.”

Think about the COVID-19 pandemic, when wartime metaphors like doctors “fighting” on the “frontlines” helped paint medical professionals as “brave” and encouraged everyone to honor their “sacrifice” through nightly applause from balconies.

Metaphors and other carefully chosen words are also used to shape the narrative of AI. Because AI is inconsistently adopted, tech leaders must first rely on telling and not showing to convince us to integrate it into our everyday lives. They’re marketing a product, and they need to sell it to us in order to pay off the immense financial investments they’ve made in AI’s success.

They push sensationalist and future-oriented vocabulary to make AI seem desirable, but that language often fails to align with lived experience, producing anxiety, skepticism, and resistance. This is an exploration of how they use language to engineer AI’s narrative and how that directs its future.

The disconnect you’re feeling between how AI is marketed towards you and how you’re experiencing it is not inconsequential, and it’s important to be aware of how language shapes your perception of it as tech leaders readjust their approaches to further convince you to accept AI.

“Big Tech also largely shapes how we think about and perceive AI, as it owns most of the popular AI tools and dominates narratives of AI harms and benefits, both now and in the future, all heavily influenced by profit-making interests and worrisome ideologies.” – Nadia Piet

“The Weapons and Wars Behind the Words”

Before we discuss AI, I want to tell you about Carol Cohn’s 1987 essay titled “Sex and Death in the Rational World of Defense Intellectuals,” because it is an excellent example of how language and metaphors can be used to exert and gatekeep power.

Cohn is an expert on gender in global politics, particularly conflict and security issues. She immersed herself in the world of (male) defense intellectuals, learning their unique language and lingo to deduce how it shapes the nature of their nuclear strategic thinking.

She found their language to be key in structuring how they think and act, and that this language was full of abstractions and euphemisms that distanced them from the realities of nuclear warfare. It was riddled with overtly sexual, domestic, and religious metaphors that functioned to connect masculine sexuality with the arms race, to minimize the seriousness of war, and to claim status and power.

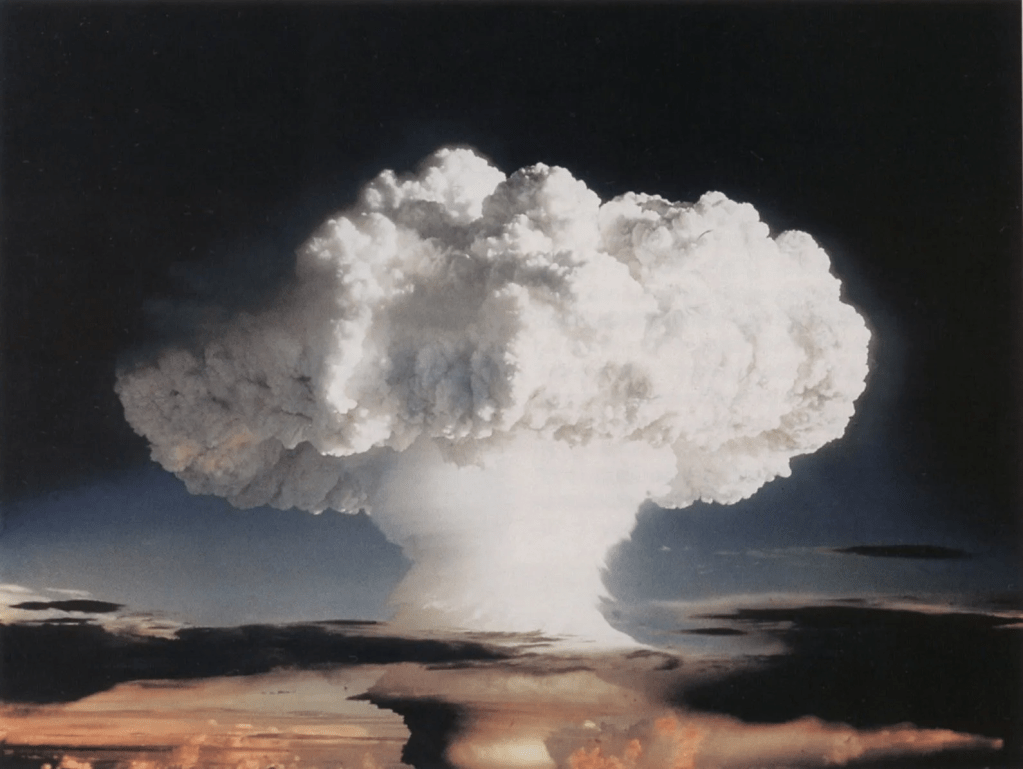

The language’s virginity metaphors, vivid imagery of white plumes, and phallic shapes of nuclear explosions reduced warfare’s aggressive competition for manhood to mere “boyish mischief.” Domestic imagery like “RVs,” “BAMBI,” “Christmas tree farms” and “cookie cutter” worked both to “humanize insentient weapons” and to make it “all right to ignore sentient human bodies, human lives.” Even religious metaphors like “the nuclear priesthood” functioned to “claim the virtues and supernatural power of the priesthood” and tacitly assert that these defense strategists are the “creators of dogma” and have become godly.

Through these functions of language, nuclear weaponry and deadly technology become something manageable and separate, enabling these seemingly normal individuals to continue doing this type of destructive work while muting ethical repercussions.

Cohn notes that speaking this language is enjoyable: “The words are fun to say; they are racy, sexy, snappy…You can reel off dozens of them in seconds, forgetting about how one might just interfere with the next, not to mention with the lives beneath them.” The process of learning the lingo, she adds, took most of her energy and attention, keeping her away from considering the “weapons and wars behind the words.”

By design, the language offers a feeling of being one of the few people “in the know.” The summer program that granted her access to these spaces was funded and lavish, adding to the feeling of power and exclusivity. She even found that when she refused to speak in these abstractions, the men treated her as if she were ignorant and simpleminded, reinforcing the need to view defense strategy through the metaphors.

I was most interested in her realization that learning this language offered a profound sense of control. She writes:

“You can get so good at manipulating the words that it almost feels as though the whole thing is under control. Learning the language gives a sense of what I would call cognitive mastery; the feeling of mastery of technology that is finally not controllable but is instead powerful beyond human comprehension, powerful in a way that stretches and even thrills the imagination. The more conversations I participated in using this language, the less frightened I was of nuclear war.”

Story Architects

TIME Magazine declared “The Architects of AI” as its 2025 Person of the Year and wrote: “AI emerged as arguably the most consequential tool in great-power competition since the advent of nuclear weapons.” Both nuclear defense strategy and AI represent moments of collision between powerful people making unilateral, life-altering decisions, and those who must follow their lead or be left behind.

In both cases, our perception of the technology is not neutral; it’s deeply intertwined with experiences of power, control, and fear. And as we see with Cohn, language plays a huge role in mediating and shaping that experience.

Like nuclear strategy, AI can be rendered manageable and even desirable through language. Tech leaders are both building AI and handing us the words to describe it. As we, the average technology users, become immersed in the vocabulary and promises of AI, we too may become thrilled and less frightened about this potentially humanity-changing technology.

That’s the tech leaders’ hope, at least: to create a vocabulary so snappy and sexy that we can’t help but fall in love with AI (even if the actual product is overpromised and has its own potential dangers). They have to rely on a language that frames AI as inevitable and imperative—the stakes of unfathomable wealth and legacy are too big and just within reach.

The new AI language has successfully permeated our personal and professional lives. The fact that several dictionaries made AI terms like “vibe coding” their 2025 words of the year only reinforces their longevity. They’re now part of the official cultural lexicon that everyone is expected to know.

Cohn argues that learning a language is not additive; you don’t just learn new vocabulary and information. She says it’s transformative, and that “when you choose to learn it you enter a new mode of thinking.”

TIME’s article, centered on a profile of NVIDIA CEO Jensen Huang, made the narrative of inevitability and necessity explicit, weaving it into its account of the past, present, and future of AI: “This is the story of how AI changed our world in 2025, in new and exciting and sometimes frightening ways. It is the story of how Huang and other tech titans grabbed the wheel of history, developing technology and making decisions that are reshaping the information landscape, the climate, and our livelihoods…It describes a frantic blitz toward an unknown destination, and the struggle to make sense of it.”

A group of powerful men grabbing the wheel of history and charting unknown territory without consideration for others is a familiar story in American history. Leaders seize what they want in the name of expansion, innovation, and exploration.

The TIME authors’ language evokes powerful metaphors of all-American frontierism—where thrill, hope, and destiny (supposedly) shape a new future for all—while also reminding the careful reader of the extreme consequences that hindsight has revealed from those same historical moments.

The article reads as an official account of the past year in AI, like an entry in the history books for 2025. The story is balanced and addresses both advancements and challenges, from data centers to mental health crises.

However, its language still reinforces that AI is here to stay. It frames this small group of leaders as authoritative visionaries, guiding the world through this inevitable and out-of-control moment. The piece ends: “Thanks to Huang, Son, Altman, and other AI titans, humanity is now flying down the highway, all gas no brakes, toward a highly automated and highly uncertain future.”

Even though the article isn’t written by the tech leaders themselves, it cements their dominant narrative of AI into the story of 2025: that it’s barrelling forward, with no regard for concerns and no concrete answer on what this new future will look like.

That narrative is not new; it was intentionally built and sustained by the tech companies over the past few years in order to convince us that their new and life-altering product is essential and inevitable. Cohn urges feminists concerned about nuclear war to “give careful attention to the language we choose to use—whom it allows us to communicate with and what it allows us to think as well as say.” The same question applies here: what do the words we are given allow us to think?

The Vocabulary

Frontier hype words like breakthrough, revolutionize, and milestone emphasize speed and inevitability, driving momentum and contributing to the notion of an “AI race.” They feel auspicious and optimistic, but can also come across as sensationalist and empty when used unsparingly. Words like those can even sound so intangible that they mirror the flattened language produced by large language models, which often generate words whose meaning you cannot “see” when reading them.

Just skim through this Google blog post. Yes, the technological advancements are pretty cool, but the words felt like they were begging me to believe them. Transformative, unprecedented, empower, advance, enable, deliver, power, capable, lead.

In order to become an essential component in all aspects of human life, AI must have this sense of urgency, snowballing towards a (singular) future without giving the individual enough time to catch up. These are all active words that remind us that other people (and the AI models themselves) are working towards a better future and that we should be too.

AI companies must convince us that AI can and will solve all of our inconveniences. This makes opting out seem like just a personal failure to use the available tools. AI is the new norm, and it’s an individual’s fault if they don’t keep up (yet another familiar narrative).

However, these breakthrough words must be paired with more concrete words to convince us that this future is already here, and that it’s not just a distant fix for all our problems. These words work to reduce fear of the unknown and make adoption feel achievable.

Practical words like implement, roll out, integrate, automate, simplify, demo, guide, and toolkit, combined with temporal terms like last month or early next year, all position AI as digestible and manageable, even if the technology isn’t fully realized.

There are also adjectives that bolster AI’s intelligence and respectability. AI is rational, decision-making, independent, logical, generative, complex, agentic, purposeful. These words draw disproportionately on traits historically coded as masculine and authoritative.

Words like agent make AI appear proactive; words like assistant or copilot make it appear helpful. Words like superintelligence and neural network connect the technology to humans, making it more understandable and even respectable. Human names like Alexa anthropomorphize it.

And it’s not just specific words; broader storytelling techniques work to subconsciously associate AI with positive attributes. The AI leaders use metaphors that draw on our human need for survival. AI pioneer Andrew Ng and Google CEO Sundar Pichai have compared AI to electricity and fire, insisting that it will be just as impactful for humanity. Every industry, Ng claimed, will be transformed by, and ultimately rely on, AI, just as each now relies on electricity.

Using language to sell something is an age-old marketing tool. But for AI, these linguistic manipulations feel like just that: the powerful and confident words don’t align with our lived experiences.

A Reminder of Control

There’s a growing dissonance between how AI is described by the tech companies, how it is experienced, and how it is culturally absorbed. The more aggressively tech leaders insist on AI as essential and good, the more visible resistance becomes.

Even as AI is framed as empowering and hopeful, we still experience it as abstract, imposed, and politically charged. The benefits of these technologies have yet to regularly reach our everyday lives, creating a disconnect between how the companies discuss AI and how we experience it.

Beyond the more immediate consequences like losing jobs and diminishing critical thinking, there’s an additional layer of discomfort and anxiety. AI has become a place where hidden power dynamics become explicit, creating a battleground for persistent political and class tensions.

AI makes the power of tech leaders, and their ties to politics, impossible to ignore. You can’t talk about AI without talking about regulation, ethics, and control—it’s telling that the TIME article begins and ends with quotes from Donald Trump. Nearly half of Americans think that Trump is carrying out a pro-Big Tech agenda when making tech policies, and does not have the people’s best interests in mind.

AI is also a reminder of the amount of power we have over our own lives. If it can transform our jobs, creativity, and livelihoods all in less than five years, how much control do we actually have? Even if we finally accept and use AI to reclaim agency, it was AI that made us feel out of control in the first place. As we gain false control, the tech leaders cement their actual control.

Every other week, it seems, an AI leader issues a statement about AI’s transformative properties and tangible results for the near future, whether that’s expediting scientific discovery or writing code (in a good way, not a bad way, they say!).

Shortly after those announcements, an AI “godfather” or even some CEOs voice fears about the future of AI and humanity. Some say they want to slow down AI until we can agree on a more ethical and safe direction. The narrative swings between the extremes of doomerism and hype, in a push and pull between company competition and ethical considerations. They scramble and even compete with each other to tell the right story to win our attention.

We’re at the beginning of a reckoning amongst tech leaders that their narrative and language aren’t working, and that AI needs a rebrand.

They’re abandoning inconsistent and overhyped definitions of AGI; they’re realizing they need to show people how AI can actually improve their lives before they lose them; they’re insisting that people’s skepticism has a detrimental effect on their business. They’re making new metaphors. Even some of the websites got a softer rebrand.

People are also creating their own narratives to fight back. “Slop” is a term the tech executives did not coin. In fact, they don’t really like it.

AI companies earned a captive audience with such rich storytelling, and now they’re losing it. If tech companies want to win it back, they need to think about a broader AI narrative that goes beyond impressive technological innovation. They need to think about lived experience and how stories resonate with people, and let AI be a tool that democratizes the imagination and creation of a better future rather than shoving one version of it down people’s throats.

With the language they’re using, it may seem life-or-death. The race to AI has even been compared to the race to develop nuclear weapons—recently, Anthropic CEO Dario Amodei likened Trump’s decision to sell AI chips to China to “selling nuclear weapons to North Korea.”

But don’t let their urgent and extremist language fool you. They don’t know where the story is going, and they can’t turn the page until we are all on board. AI could have a lot of societal benefits, from drug discovery to weather prediction. But there needs to be some more collaboration and imagination in creating this technology; it can’t solely come from an isolated billionaire class.

Cohn ends her essay by saying: “Our reconstructive task is a task of creating compelling alternative visions of possible futures, a task of recognizing and developing alternative conceptions of rationality, a task of creating rich and imaginative alternative voices—diverse voices whose conversations with each other will invent those futures.”

Let’s break out of the language created by those tech leaders who balance on the scaffolding. We have so many words, so many metaphors, so many stories that we don’t have to let our voices be constrained or homogenized into the hyped voices of these tech leaders or the single bland voice of an AI chatbot.
